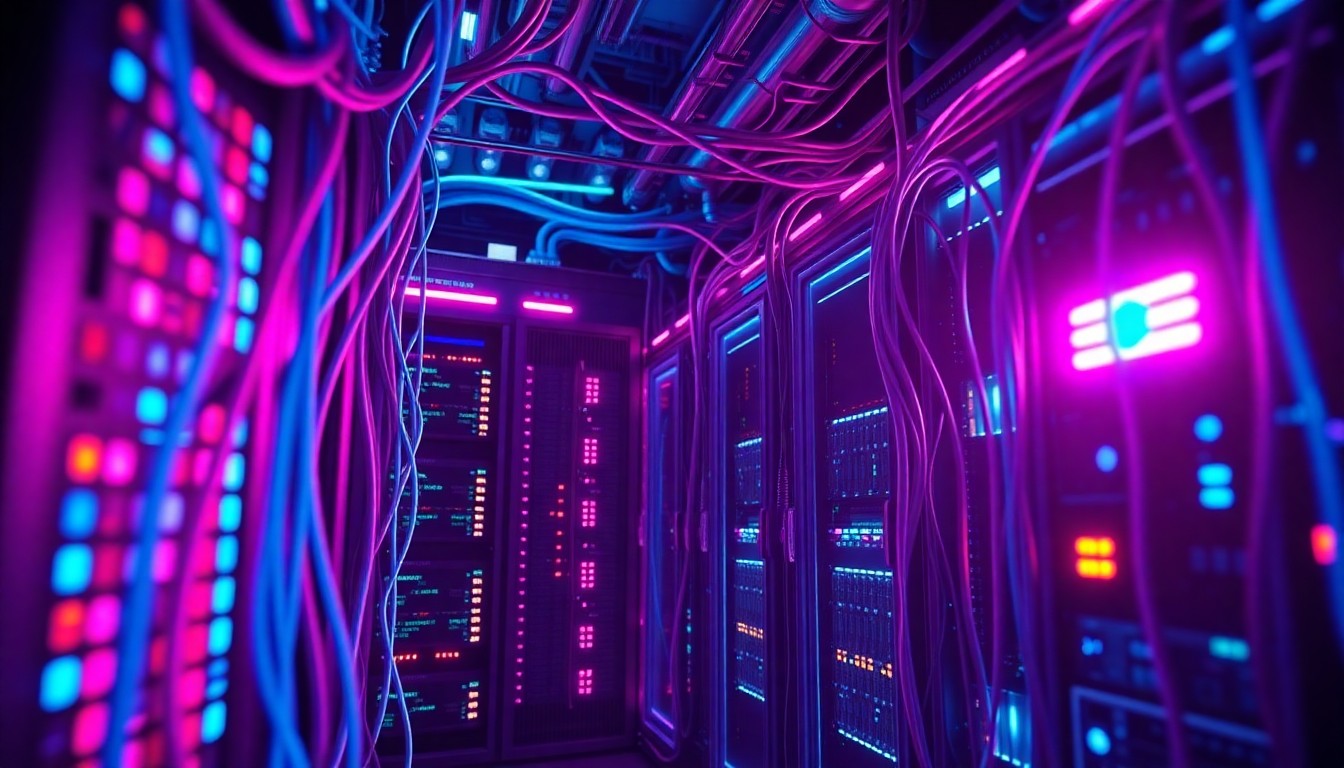

AI Cybersecurity Threats Spur Calls for Regulation

Experts warn of rising risks to personal data, economy, and national security as AI-powered cyberattacks escalate.

Apr. 17, 2026 at 7:56pm

As AI-powered cyberattacks escalate, experts warn of growing risks to personal data, the economy, and national security if government and business leaders fail to establish clear regulations.

Denver Today

As new agentic AI models continue to emerge, cybersecurity experts warn that bad actors could exploit the capabilities of these systems to hack into networks, putting personal data, the economy, and national security at risk. Experts say business and government leaders need to regulate the technology before it's too late: IBM data show a 44% year-over-year increase in cyberattacks on public-facing software and systems, many of them AI-powered, and attackers using AI models were behind a high-profile breach at Anthropic. Discussions at the Berkman Klein Center highlight the challenges of defining liability and mandating security measures against AI-driven threats as the cybersecurity landscape continues to evolve rapidly.

Why it matters

The growing capabilities of agentic AI models pose significant risks if misused by cybercriminals, who can leverage these systems to more effectively target vulnerabilities, craft sophisticated phishing attacks, and potentially even 'hack back' against companies. Experts argue that without clear regulation and security standards, these AI-powered threats could spiral out of control and compromise personal data, the economy, and national security.

The details

Cybersecurity experts warn that the attributes that make agentic AI models useful tools in the fight against cybercrime, namely their ability to sift through vast amounts of data quickly and autonomously, could also be exploited by bad actors. Recent data from IBM show a 44% year-over-year increase in cyberattacks aimed at public-facing software applications and systems, many of which used AI. High-profile incidents include the November data breach of Anthropic, in which attackers used their own AI models to scan for weaknesses in the company's source code. Experts also note that phishing attacks have become more sophisticated, with AI-generated messages that no longer contain obvious red flags such as misspellings or unnatural language.

- In November 2025, Anthropic suffered a data breach in which attackers used AI models to target vulnerabilities.

- A 2026 IBM study found a 44% year-over-year increase in cyberattacks on public-facing software and systems, many of them AI-powered.

The players

James Mickens

Gordon McKay Professor of Computer Science at Harvard University.

Robert Knake

Partner at Paladin Capital, a cyber-venture capital group, and former deputy national cyber director for strategy and budget in the Office of the National Cyber Director at the White House from 2022 to 2023.

Josephine Wolff

Associate dean for research and professor of cybersecurity policy at the Fletcher School at Tufts University.

What they’re saying

“The unfortunate thing is that the bad people only have to win once in some sense, whereas the defenders have to win all the time. To me, at least, that's a concerning aspect of what it means to think about agentic cyber security, attacks and defenses.”

— James Mickens, Gordon McKay Professor of Computer Science

“A year ago, we still had email messages in our inbox that had misspellings that were not colloquial English, that were easy to identify if you were vigilant. Now, all those signals are gone.”

— Robert Knake, Partner at Paladin Capital

“Documentation and inventories are both really important and really hard. Can you inventory all of the code that's running on your computers so that if there's a vulnerability, if something goes wrong, you can at least know where you need to look?”

— Josephine Wolff, Associate dean for research and professor of cybersecurity policy at the Fletcher School at Tufts University

What’s next

The federal government is expected to consider new regulations that would require the private sector to take greater steps to prevent AI-powered cyberattacks that jeopardize consumer and national safety. Discussions are ongoing about defining liability, mandating security measures, and verifying online identities to combat the evolving threat landscape.

The takeaway

As agentic AI models become more advanced and accessible, the risk of these systems being exploited by cybercriminals is growing quickly. Experts agree that government and business leaders urgently need to take decisive action to establish clear regulations, security standards, and liability frameworks that mitigate the threat of AI-powered cyberattacks on personal data, the economy, and national security.