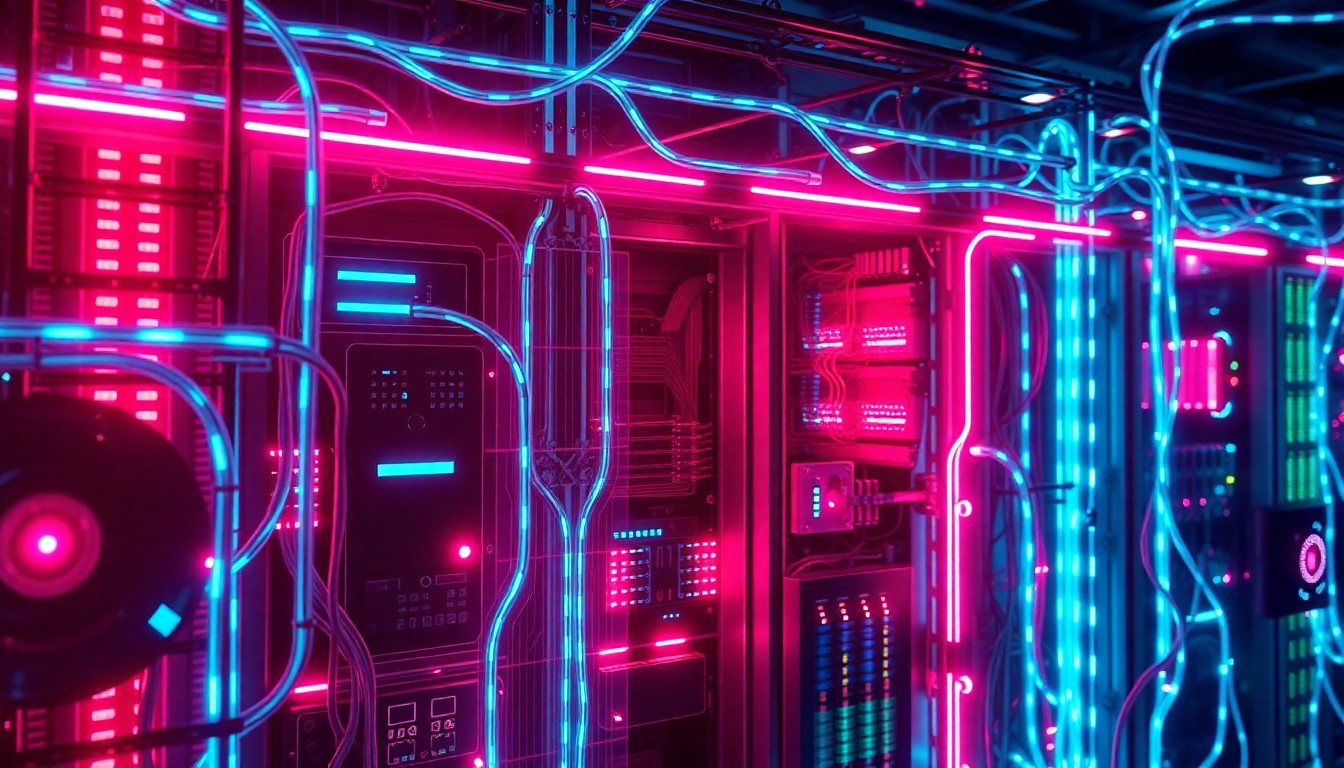

Privacy-Led UX Becomes Key for AI Adoption, MIT Report Finds

Organizations must rethink data consent to build trust and scale AI responsibly, according to new research.

Apr. 15, 2026 at 6:35am

As AI systems become more advanced, organizations must build robust privacy infrastructure to govern agent-generated data flows and deploy AI responsibly. Cambridge Today

A new report by MIT Technology Review Insights finds that organizations must shift from one-time data consent interactions to ongoing, trust-based relationships with users to succeed in the AI era. The report, produced in partnership with Usercentrics, a data privacy technology company, highlights how privacy-led user experience (UX) is becoming a prerequisite for AI growth, as consumer data is a core foundation for AI-powered personalization.

Why it matters

As AI systems become more advanced and begin acting on users' behalf, the traditional consent moment may never occur. Governing these agent-generated data flows requires robust privacy infrastructure that goes beyond basic cookie banners. Organizations that establish clear, enforceable privacy and data transparency policies now will be better positioned to deploy AI responsibly and at scale in the future.

The details

The report is based on interviews with industry experts whose work intersects privacy technology, digital marketing, consumer analytics, and trust. Key findings include: 1) privacy is evolving from a one-time consent transaction into an ongoing data relationship; 2) privacy-led UX is a prerequisite for AI growth, because consumer data is core to AI-powered personalization; and 3) realizing the advantages of privacy-led UX requires cross-functional collaboration and clear leadership.

- The report was published on April 15, 2026.

The players

MIT Technology Review Insights

The custom publishing division of MIT Technology Review, the world's longest-running technology magazine, backed by the world's foremost technology institution.

Usercentrics

A global leader in data privacy technology that partnered with MIT Technology Review Insights on the report.

Adelina Peltea

The chief marketing officer at Usercentrics.

Laurel Ruma

The global director of custom content for MIT Technology Review Insights.

What they’re saying

“The opportunity is significant. Privacy-led UX doesn't just reduce risk, it builds the kind of trust that compounds. Organizations that get consent right unlock higher opt-in rates, better quality first-party data, and the signal fidelity that makes personalization and AI outputs actually work. In the AI era, trust is not a soft metric. It is the foundation everything else is built on.”

— Adelina Peltea, Chief Marketing Officer, Usercentrics

“Organizations can no longer treat privacy as a compliance checkpoint at the edge of the user experience. Our research shows that privacy-led UX is becoming foundational to how companies build trust, collect meaningful data, and ultimately scale AI systems responsibly.”

— Laurel Ruma, Global Director of Custom Content, MIT Technology Review Insights

What’s next

The report offers a practical framework, called TRUST, to help organizations get privacy-led UX right. Its guidance includes defining data collection and usage strategies and ensuring that UX incorporates data consent, with a focus on banner design.

The takeaway

In the AI era, trust is the foundation upon which everything else is built. Organizations that establish clear, enforceable privacy and data transparency policies now will be better positioned to deploy AI responsibly and at scale in the future.