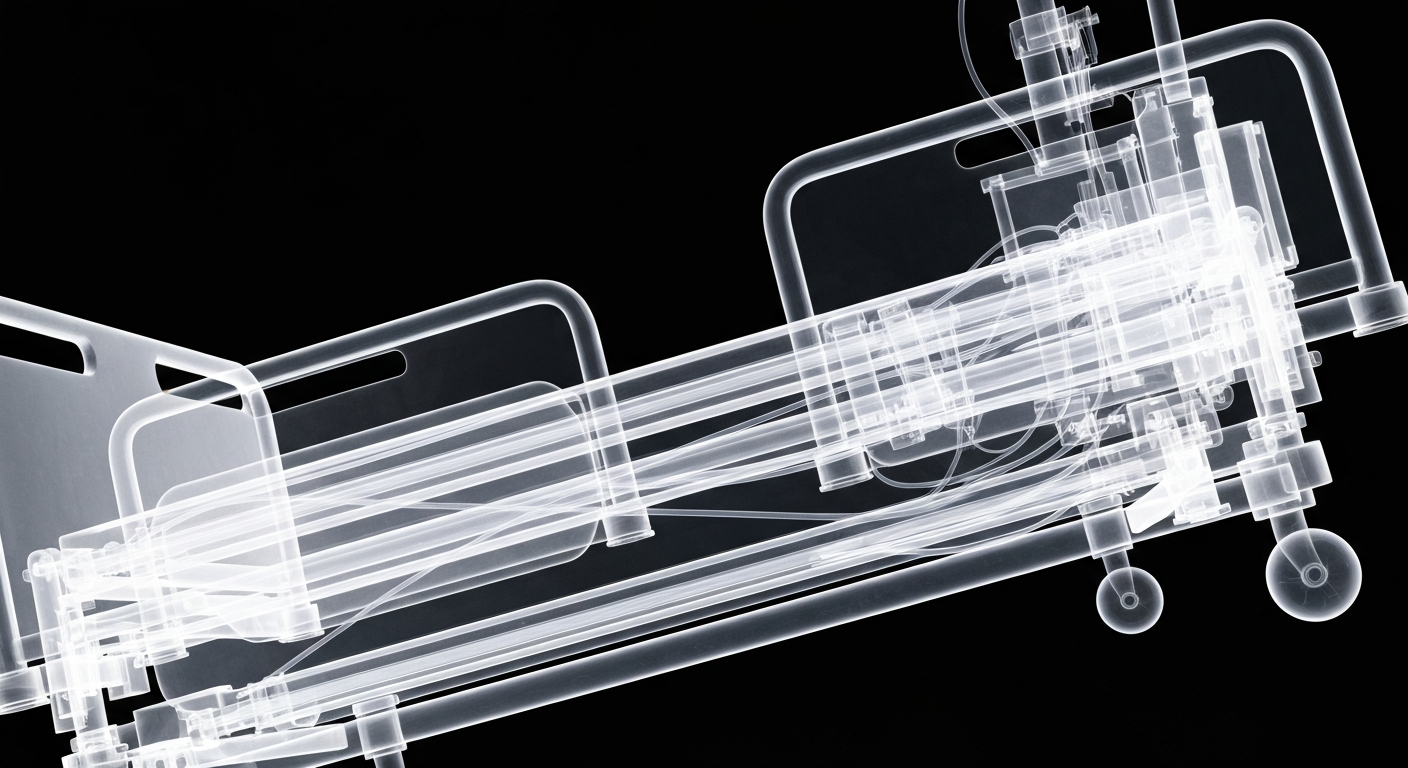

Pentagon Clashes with Anthropic Over Military AI Use Safeguards

Disagreements over surveillance limits and ethical use stall $200 million contract talks.

Jan. 30, 2026 at 11:07am

The Pentagon is at odds with artificial intelligence company Anthropic over safeguards that would prevent the government from using the company's technology to target weapons autonomously and conduct domestic surveillance in the U.S. After extensive talks under a contract worth up to $200 million, the U.S. Department of Defense and Anthropic have reached a standstill over Anthropic's position on how its AI tools can be used.

Why it matters

This clash highlights the ongoing tensions between Silicon Valley and the U.S. government over the use of emerging technologies like AI for military and intelligence purposes. It also raises concerns about the ethical implications of deploying AI systems without sufficient oversight and safeguards.

The details

Anthropic representatives raised concerns during discussions with government officials that the company's tools could be used to spy on Americans or assist weapons targeting without sufficient human oversight. The Pentagon opposed the company's guidelines, saying it should be able to deploy commercial AI technology regardless of companies' usage policies, so long as that use complies with U.S. law. Anthropic's models are trained to avoid taking steps that might lead to harm, and Anthropic staffers would be the ones to retool its AI for the Pentagon.

- The contract talks between the Pentagon and Anthropic have been ongoing for an extended period.

- Last year, Anthropic clashed with the White House over the company's support for regulation of AI at the state level, opposing a law that would have preempted state AI regulation.

The players

Anthropic

An artificial intelligence company that has been in discussions with the U.S. Department of Defense over a contract worth up to $200 million.

U.S. Department of Defense

The federal agency that has been in contract negotiations with Anthropic over the use of the company's AI technology for military and national security purposes.

What they’re saying

Anthropic said its AI is “extensively used for national security missions by the U.S. government and we are in productive discussions with the Department of War about ways to continue that work.”

— Anthropic

The Pentagon opposed the tech company's guidelines, saying it should be able to deploy commercial AI technology regardless of companies' usage policies, so long as that use complies with U.S. law.

— Pentagon
