Instagram Delayed Launch of Teen Safety Features, Court Filing Reveals

Prosecutors question why it took Instagram years to roll out basic safety tools like a nudity filter for private messages sent to minors.

Feb. 24, 2026 at 8:35pm

A court filing has revealed that Instagram was aware of teen safety issues in direct messages as early as 2018, but did not launch its unwanted nudity filter until 2024. Prosecutors questioned Instagram head Adam Mosseri about the lengthy delay in implementing basic safety features to protect minors on the platform.

Why it matters

This case highlights the growing scrutiny social media companies face over the harm their platforms can cause young users. It raises questions about whether tech giants prioritized user growth and engagement over the wellbeing of their youngest users.

The details

In a deposition, Mosseri was asked about an August 2018 email chain in which he mentioned that 'horrible' things could happen via Instagram direct messages, including 'dick pics'. However, Meta did not introduce a feature to automatically blur explicit images in Instagram DMs until April 2024. Prosecutors argued that this delay showed the company was aware of the issue but slow to act. The testimony also revealed that 19.2% of survey respondents aged 13 to 15 said they had seen nudity or sexual images on Instagram that they didn't want to see.

- In August 2018, Mosseri mentioned 'horrible' things could happen via Instagram direct messages.

- In April 2024, Meta introduced a feature to automatically blur explicit images in Instagram DMs.

The players

Adam Mosseri

Head of Instagram.

Guy Rosen

Meta VP and Chief Information Security Officer.

What they’re saying

“I think that it's pretty clear that you can message problematic content in any messaging app, whether it's Instagram or otherwise.”

— Adam Mosseri, Head of Instagram

What’s next

The judge in the case will decide whether to hold Meta accountable for the delayed rollout of teen safety features on Instagram.

The takeaway

This case highlights the ongoing tension between tech companies' desire for user growth and engagement and their responsibility to protect vulnerable young users from harmful content and experiences on their platforms.

Boston top stories

Boston events

Apr. 7, 2026

Boston Red Sox vs. Milwaukee BrewersApr. 7, 2026

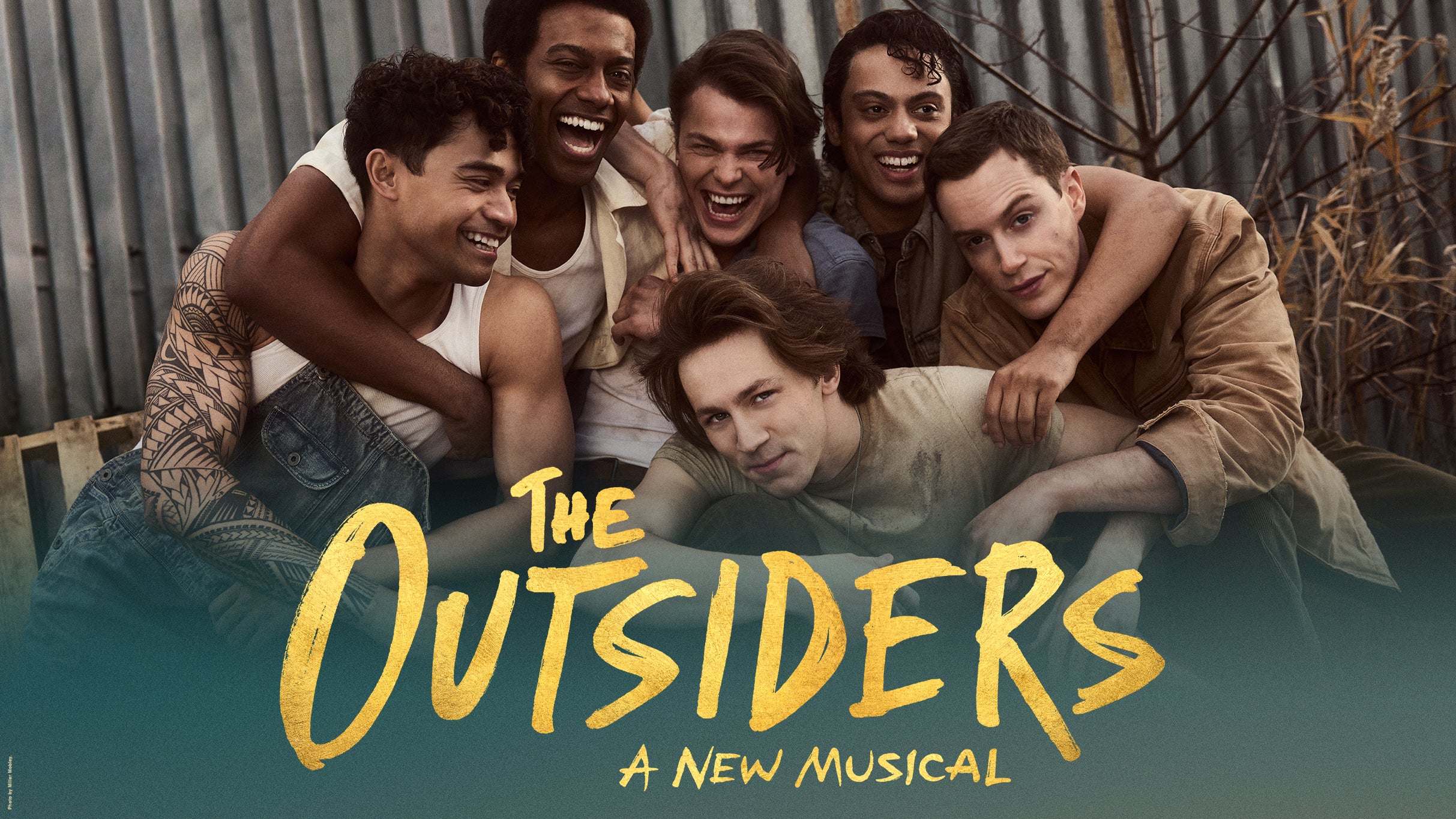

The Outsiders (Touring)