Oskaloosa Today

By the People, for the People

Patients Turn to AI Chatbots for Medical Advice as Doctors Fail Them

Women with complex chronic illnesses are increasingly relying on AI chatbots for health guidance, as the traditional medical system often falls short.

Apr. 3, 2026 at 3:35pm

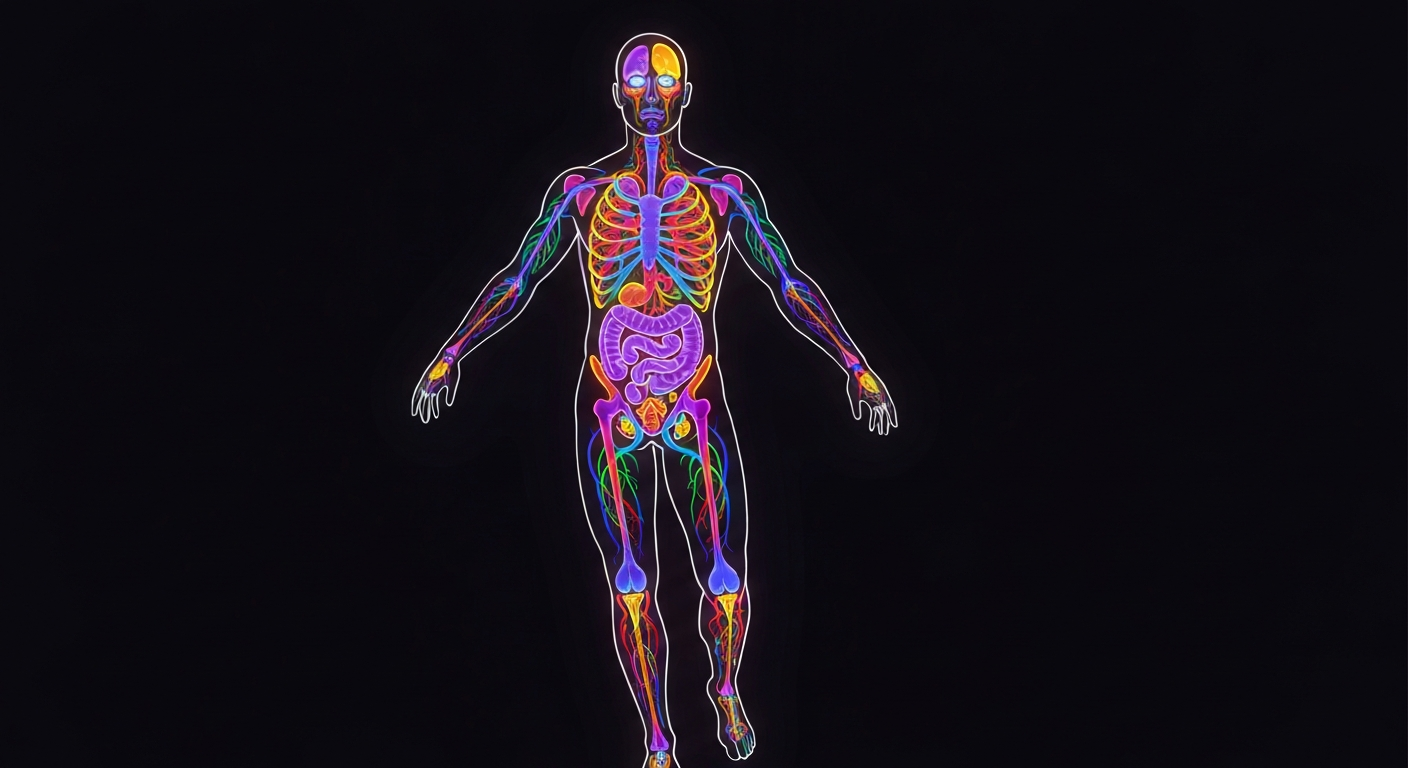

As patients increasingly turn to AI chatbots for medical guidance, the human body's intricate systems are illuminated in a dynamic neon display, underscoring the complexities of chronic illness and the need for integrated healthcare solutions. (Oskaloosa Today)

When Margie Smith, 70, of Swannanoa, North Carolina, struggled to get answers from a parade of specialists for her persistent cough, breathlessness, and other symptoms, she turned to the AI chatbot Claude. Through lengthy conversations, Smith concluded she had long COVID causing dysautonomia, a condition the medical system had failed to diagnose. More people, especially women with complex chronic illnesses, are using chatbots for health advice because they feel let down by doctors who minimize their symptoms. While chatbots can sometimes provide helpful insights, experts warn they also carry significant risks of misinformation and dangerous recommendations.

Why it matters

This trend highlights the failures of the traditional medical system, especially in addressing complex, poorly understood conditions that disproportionately affect women. It also raises concerns about the risks of relying on unregulated AI chatbots for serious medical advice, as they can provide inaccurate information that could lead to harmful outcomes.

The details

Patients such as Margie Smith and Patty Costello have found chatbots including Claude and ChatGPT helpful in identifying their conditions, such as long COVID and mast cell activation syndrome, when doctors were unable to do so. However, experts warn that chatbots are not a substitute for professional medical advice, because they can draw from unreliable sources and make significant errors in diagnosis and treatment recommendations. The women interviewed for the story acknowledged the limitations of chatbots but felt they had no choice but to turn to them after the traditional medical system failed to take their symptoms seriously.

- In 2022, Margie Smith, now 70, began seeking help from a parade of specialists for her persistent symptoms.

- In October 2025, Caroline Gamwell, 31, described her symptoms to ChatGPT using precise medical terminology and received a suggestion that led to her being correctly diagnosed with pelvic congestion syndrome.

- In January 2026, Gamwell had surgery to treat her pelvic congestion syndrome and is now symptom-free.

The players

Margie Smith

A 70-year-old resident of Swannanoa, North Carolina, who turned to the AI chatbot Claude after failing to get answers from multiple specialists for her persistent symptoms, which she later concluded were caused by long COVID leading to dysautonomia.

Patty Costello

A user experience researcher in Idaho who was diagnosed with mast cell activation syndrome after ChatGPT suggested the condition, which fit her symptoms of nausea, diarrhea, heartburn, and fatigue.

Caroline Gamwell

A 31-year-old pelvic floor physical therapist in Denver who used her medical expertise to critically evaluate ChatGPT's suggestions and ultimately received a correct diagnosis of pelvic congestion syndrome, a condition she had struggled with for years.

Deborah Holcomb

A 62-year-old former electrical engineer in San Diego who has myalgic encephalomyelitis/chronic fatigue syndrome and finds chatbots invaluable for identifying symptom patterns and exploring treatment options, though she doesn't make major changes without consulting a doctor.

Samantha Allen Wright

A 36-year-old English professor in Oskaloosa, Iowa, who has used ChatGPT to look for information about managing her migraines and a type of dysautonomia called postural orthostatic tachycardia syndrome (POTS), and has been struck by the chatbot's uneven performance.

What they’re saying

“The medical system really failed me. Is it a good thing to be depending on AI for medical advice? I don't think so. But it's the option that's available.”

— Margie Smith

“I've been wanting so badly to send a message to my primary care, but I haven't yet, to kind of be like: 'I told you so.' 'You were going to have me live the rest of my life in this chronic pain.'”

— Caroline Gamwell, pelvic floor physical therapist

“There are a lot of problems with using chatbots for medical advice. But I think we also have to admit that there's a reason people are doing this.”

— James Landay, co-director of Stanford University's Institute for Human-Centered AI

What’s next

Experts say that while chatbots can sometimes provide helpful insights, they also carry significant risks of misinformation and dangerous recommendations. Patients should approach any medical advice from AI chatbots with extreme caution and consult with licensed healthcare professionals before making any major changes to their treatment plans.

The takeaway

Patients need better support from the healthcare system, and AI tools should be thoroughly evaluated and regulated before being used for personalized medical guidance.