Judge Blocks Pentagon's Move Labeling Anthropic a Risk

Ruling says Pentagon likely retaliated over Anthropic's speech on AI limits

Mar. 27, 2026 at 11:00am

A federal judge has temporarily blocked a Defense Department order that had branded the AI lab Anthropic as a "supply-chain risk", saying officials had likely broken the law and appeared to be punishing Anthropic for publicly pushing for limits on how the US military uses its AI.

Why it matters

This case highlights the tension between the government's national security concerns and a tech company's desire to set ethical boundaries on how its AI technology is deployed. It raises questions about the extent to which the government can restrict a private company's free speech and business operations.

The details

The judge said the Pentagon's stance was an "Orwellian notion" that a US firm can be cast as a potential saboteur simply for disagreeing with government policy. The temporary injunction will remain in place while the court hears Anthropic's case against the Pentagon, in which the company argues the federal government is violating the First Amendment.

- On Thursday, US District Judge Rita F. Lin issued the temporary injunction.

The players

Anthropic

A US-based AI lab that developed the Claude AI system. Anthropic has publicly opposed the deployment of its technology in mass domestic surveillance and fully autonomous weapons.

US Department of Defense

The federal agency that had branded Anthropic as a "supply-chain risk", grouping it with companies tied to adversarial governments.

US District Judge Rita F. Lin

The judge who temporarily blocked the Pentagon's order against Anthropic, saying officials had likely broken the law and appeared to be punishing the company for its speech on AI limits.

What they’re saying

US District Judge Rita F. Lin called the government's stance an "Orwellian notion": the idea that a US firm can be cast as a potential saboteur simply for disagreeing with government policy.

What’s next

A separate, related case is still pending in a federal appeals court in Washington. Meanwhile, the military continues to rely on Anthropic's Claude AI system while planning a shift away from it.

The takeaway

This case highlights the complex balance between national security concerns and a tech company's right to free speech and ethical business practices. It underscores the need for clear guidelines and transparency around how the government can restrict private firms, especially in the rapidly evolving field of AI.

Washington top stories

Washington events

Mar. 27, 2026

Disney's Beauty and the Beast (Touring)Mar. 27, 2026

The Crucible w/ Washington National OperaMar. 27, 2026

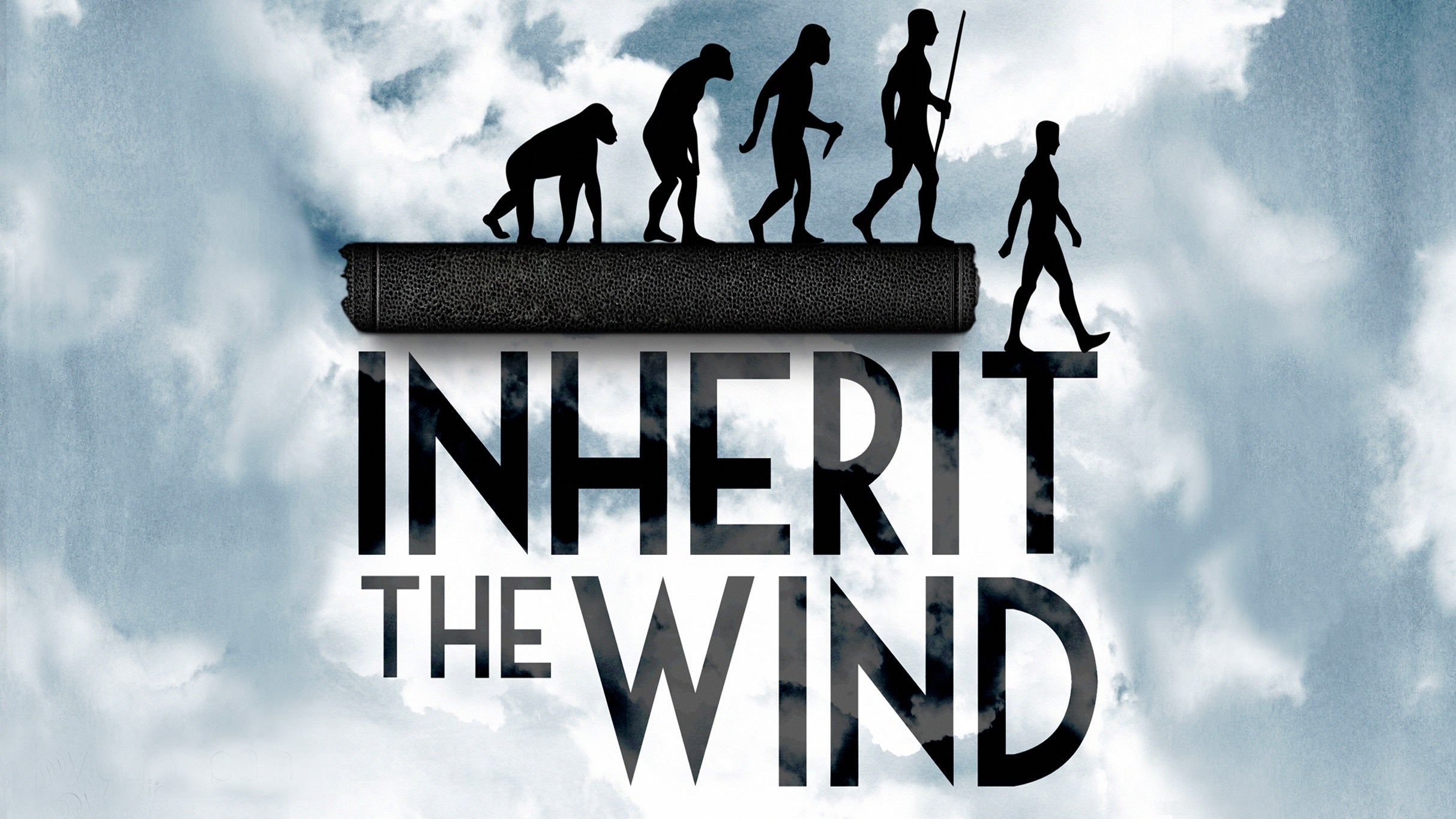

Inherit the Wind