US Military Used Anthropic's Claude AI Despite Trump's Ban

Complexities of AI Integration Highlighted as Military Transitions to OpenAI Tools

Published on Mar. 2, 2026

The US military reportedly utilized Anthropic's AI model, Claude, in its recent attack on Iran, despite a decision announced hours earlier by Donald Trump to sever ties with the company and its AI tools. This situation highlights the challenges inherent in withdrawing powerful AI tools from military operations once they are deeply integrated into existing systems. Following the break with Anthropic, OpenAI has reached an agreement with the Pentagon to provide its tools, including ChatGPT, for use within the Department of Defense's classified network.

Why it matters

The dispute with Anthropic was initially triggered by the US military's use of Claude in a January raid to capture the president of Venezuela, Nicolás Maduro. This latest incident underscores the complexities involved in rapidly replacing embedded AI technologies, even amidst strong political objections.

The details

According to the Wall Street Journal, US military command employed Claude for intelligence gathering, target selection, and battlefield simulations during the joint US-Israel bombardment of Iran. On Friday, just hours before the attack began, Trump ordered all federal agencies to immediately cease using Claude, publicly denouncing Anthropic as a 'Radical Left AI company'. Defense Secretary Pete Hegseth accused Anthropic of 'arrogance and betrayal,' demanding full and unrestricted access to all of the company's AI models for lawful purposes.

- The US military reportedly utilized Anthropic's AI model, Claude, in its recent attack on Iran, which began on Saturday.

- On Friday, just hours before the Iran attack began, Trump ordered all federal agencies to immediately cease using Claude.

The players

Donald Trump

The President of the United States who ordered all federal agencies to cease using Anthropic's Claude AI.

Pete Hegseth

The current U.S. Secretary of Defense who accused Anthropic of 'arrogance and betrayal' and demanded full access to the company's AI models.

Anthropic

An American artificial intelligence company that created the Claude AI model, which the U.S. military reportedly used despite Trump's ban.

OpenAI

An AI research company that has reached an agreement with the Pentagon to provide its tools, including ChatGPT, for use within the Department of Defense's classified network.

Sam Altman

The CEO of OpenAI who announced the agreement with the Pentagon.

What they’re saying

“America's warfighters will never be held hostage by the ideological whims of Big Tech.”

— Pete Hegseth, U.S. Secretary of Defense (Wall Street Journal)

What’s next

Hegseth indicated that Anthropic would continue to provide services for up to six months to facilitate a 'seamless transition to a better and more patriotic service'.

The takeaway

The use of Claude by the US military during the Iran attack, despite Trump's ban, highlights the practical difficulties in rapidly replacing embedded AI technologies, even amidst strong political objections. As the military transitions away from Anthropic's tools, the shift in AI providers may impact ongoing operations and future technological development.

Washington top stories

Washington events

Mar. 10, 2026

Cat Power - The Greatest TourMar. 10, 2026

CAA Men's Basketball Championship Session 7Mar. 10, 2026

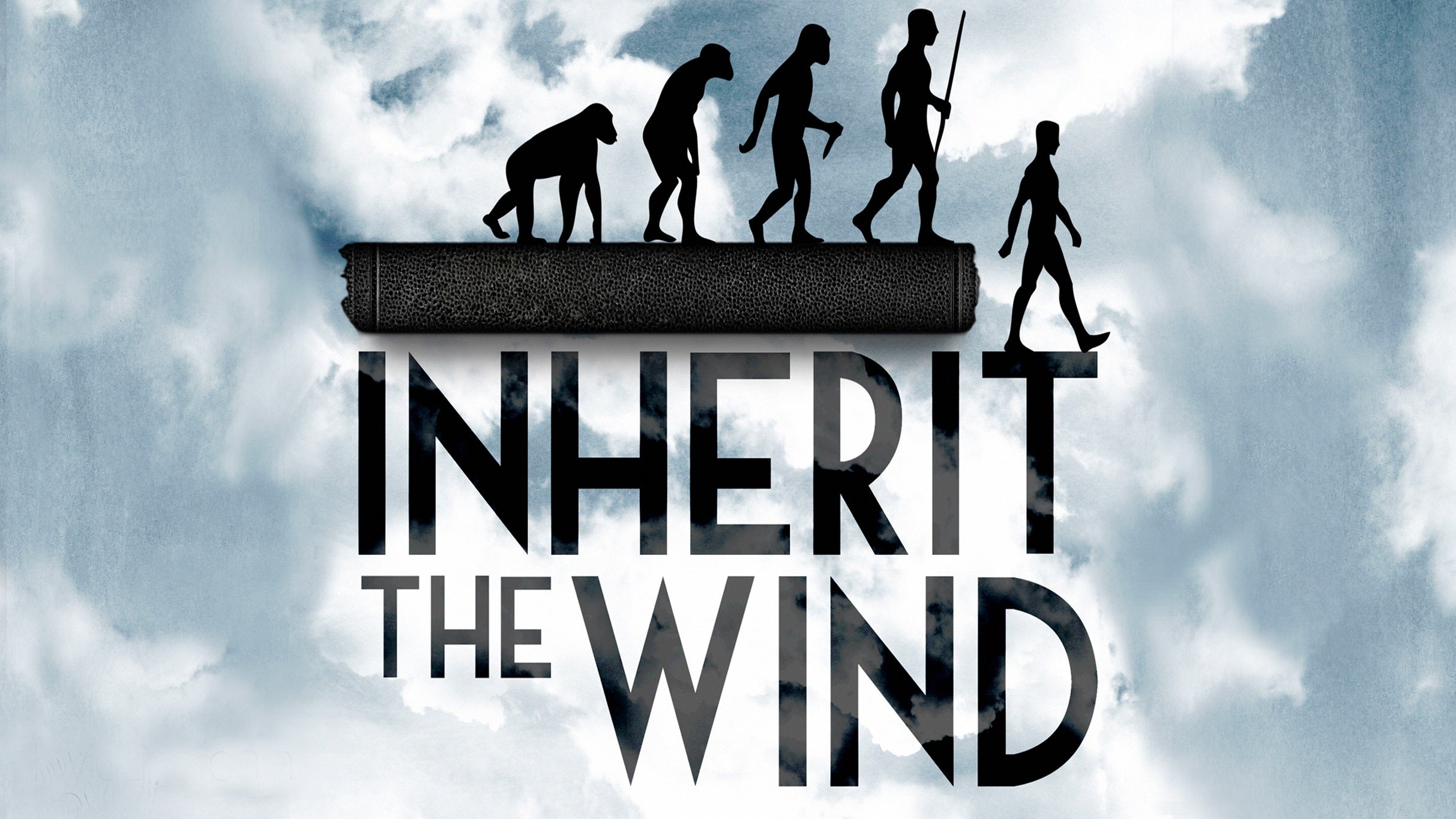

Inherit the Wind