
Y Combinator Chief Reveals Secret to Coding 10,000 Lines a Day

Garry Tan's 'thin harness, fat skills' framework powers extreme AI-driven productivity

Apr. 13, 2026 at 2:05am by Ben Kaplan


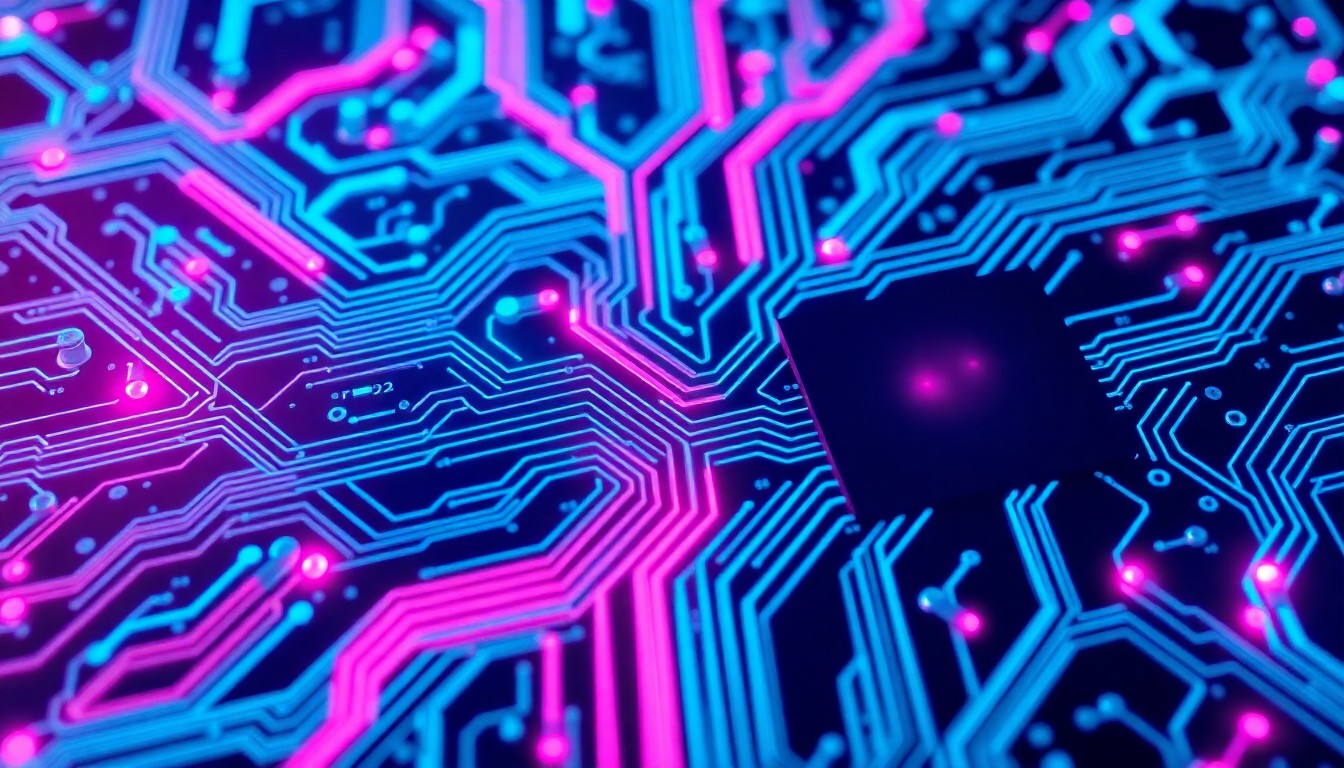

The hidden 'harness' architecture behind AI-powered productivity breakthroughs. (San Francisco Today)

Garry Tan, the head of startup accelerator Y Combinator, claims to ship 600,000 lines of production code every 60 days (10,000 lines a day) while running YC full-time. He attributes this extreme productivity to a framework he calls 'thin harness, fat skills', an approach to building AI agents that was validated by the recent accidental leak of Anthropic's Claude Code source.

Why it matters

Tan's framework offers investors a new lens for evaluating the true capabilities of AI-native teams. Rather than looking only at the underlying language model, investors should assess the rigor and sophistication of the surrounding 'harness' architecture that enables massive productivity gains. Companies that have built this kind of system will be able to compound their advantages over time in ways that raw model capability alone cannot.

The details

Tan's 'thin harness, fat skills' approach rests on five key concepts:

- Skill files that encode reusable processes rather than just content

- A minimalist 'harness' that efficiently manages context and runs the model in a loop

- Resolvers that automatically match user intent to the right skill

- A clear separation of deterministic and latent capabilities

- Diarization that turns document retrieval into structured analysis

The leaked Claude Code source confirmed that Anthropic has implemented this framework in depth, with a highly optimized 1,906-file codebase.
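To make the concepts above more concrete, here is a minimal Python sketch of how several of them might fit together. It is an illustration under stated assumptions, not Tan's code or Anthropic's: the names `Skill`, `resolve`, `run_harness`, `fake_model`, and the `TOOL ...` text protocol are all hypothetical, the resolver uses simple keyword overlap in place of whatever matching a production system uses, the model is a stub rather than a real LLM call, and diarization is not shown.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Skill:
    """A 'fat' skill: a named, reusable process the model should follow (hypothetical)."""
    name: str
    triggers: list[str]  # keywords the resolver matches against the request
    instructions: str    # the process itself, not just reference content


# Deterministic capabilities: plain functions with exact, repeatable output.
# Latent (judgment) work is left to the model.
DETERMINISTIC_TOOLS: dict[str, Callable[[str], str]] = {
    "count_lines": lambda text: str(len(text.splitlines())),
}

SKILLS = [
    Skill("code_review", ["review", "diff"], "Read the diff, list risks, suggest fixes."),
    Skill("meeting_prep", ["meeting", "agenda"], "Gather attendee notes, draft an agenda."),
]


def resolve(intent: str, skills: list[Skill]) -> Optional[Skill]:
    """Resolver (assumed keyword overlap): pick the skill with the most trigger words."""
    words = set(intent.lower().split())
    best, best_score = None, 0
    for skill in skills:
        score = sum(1 for trigger in skill.triggers if trigger in words)
        if score > best_score:
            best, best_score = skill, score
    return best


def run_harness(intent: str, skills: list[Skill],
                model: Callable[[str], str], max_turns: int = 5) -> str:
    """Thin harness: build context, call the model in a loop, run tool requests."""
    skill = resolve(intent, skills)
    header = f"SKILL: {skill.name}\n{skill.instructions}" if skill else "SKILL: none"
    context = [header, f"USER: {intent}"]
    for _ in range(max_turns):
        reply = model("\n".join(context))
        if not reply.startswith("TOOL "):
            return reply  # the model produced a final answer
        name, _, arg = reply[len("TOOL "):].partition(" ")
        tool = DETERMINISTIC_TOOLS.get(name)
        result = tool(arg) if tool else f"unknown tool {name!r}"
        context.append(f"TOOL_RESULT {name}: {result}")
    return "stopped: turn limit reached"


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM: requests one tool call, then answers."""
    if "TOOL_RESULT" not in prompt:
        return "TOOL count_lines alpha\nbeta\ngamma"
    return f"DONE ({prompt.splitlines()[-1]})"


print(run_harness("please review this diff", SKILLS, fake_model))
# prints "DONE (TOOL_RESULT count_lines: 3)"
```

In this sketch the harness stays thin (a short loop that assembles context and dispatches tool requests) while the process knowledge lives in the skill records, which is the division of labor the framework's name describes.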

- On March 31, 2026, security researcher Chaofan Shou discovered that Anthropic had accidentally published the 59.8 MB source map file for version 2.1.88 of Claude Code on npm.

- Tan laid out the 'thin harness, fat skills' framework in a widely circulated post on X earlier this year.
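The npm leak turned on what a source map can carry. In the Source Map Revision 3 format, a `.map` file lists the original files in a `sources` array and may embed their complete text in an optional, parallel `sourcesContent` array, so publishing the map can mean publishing the source. The Python sketch below reads that field; the helper name `embedded_sources` and the sample map are hypothetical and are not drawn from the actual Claude Code package.

```python
from __future__ import annotations

import json


def embedded_sources(source_map_text: str) -> dict[str, str]:
    """Map each original file path to its embedded text, if the map includes it."""
    source_map = json.loads(source_map_text)
    paths = source_map.get("sources", [])
    contents = source_map.get("sourcesContent") or []
    # sourcesContent is optional and parallel to sources; null entries mean "not embedded".
    return {path: text for path, text in zip(paths, contents) if text is not None}


# A tiny, made-up source map (not the real Claude Code file).
example_map = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/main.ts", "src/util.ts"],
    "sourcesContent": ["export const main = () => 1;\n", None],
    "names": [],
    "mappings": "",
})

print(embedded_sources(example_map))
# prints {'src/main.ts': 'export const main = () => 1;\n'}
```

A map built without `sourcesContent` still reveals file paths through `sources`, but not the code itself.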

The players

Garry Tan

The head of startup accelerator Y Combinator, who claims to ship 600,000 lines of production code every 60 days while running YC full-time.

Chaofan Shou

A security researcher who discovered Anthropic's accidental publication of the Claude Code source map file.

Boris Cherny

The head of Anthropic's Claude Code project, who confirmed that the source leak was caused by developer error.

Steve Yegge

A software engineer whose estimates, cited by Tan, put developers working with well-harnessed AI agents at 10x to 100x the productivity of developers using standard chat tools.

Anthropic

The AI research company behind Claude Code, whose leaked source revealed how deeply the tool implements a 'thin harness, fat skills' architecture.

What they’re saying

“100% of my contributions to Claude Code were written by Claude Code.”

— Boris Cherny, Head of Claude Code, Anthropic

What’s next

Investors performing due diligence on AI-native companies should assess the sophistication of the team's 'harness' architecture, not just the capabilities of the underlying language model.

The takeaway

Tan's 'thin harness, fat skills' framework represents a new frontier in AI-driven productivity, and companies that master this approach will be able to compound their advantages over time. The leaked Claude Code source has offered a rare glimpse into the depth of this architecture and sets a new standard by which investors can evaluate the true capabilities of AI-native teams.
