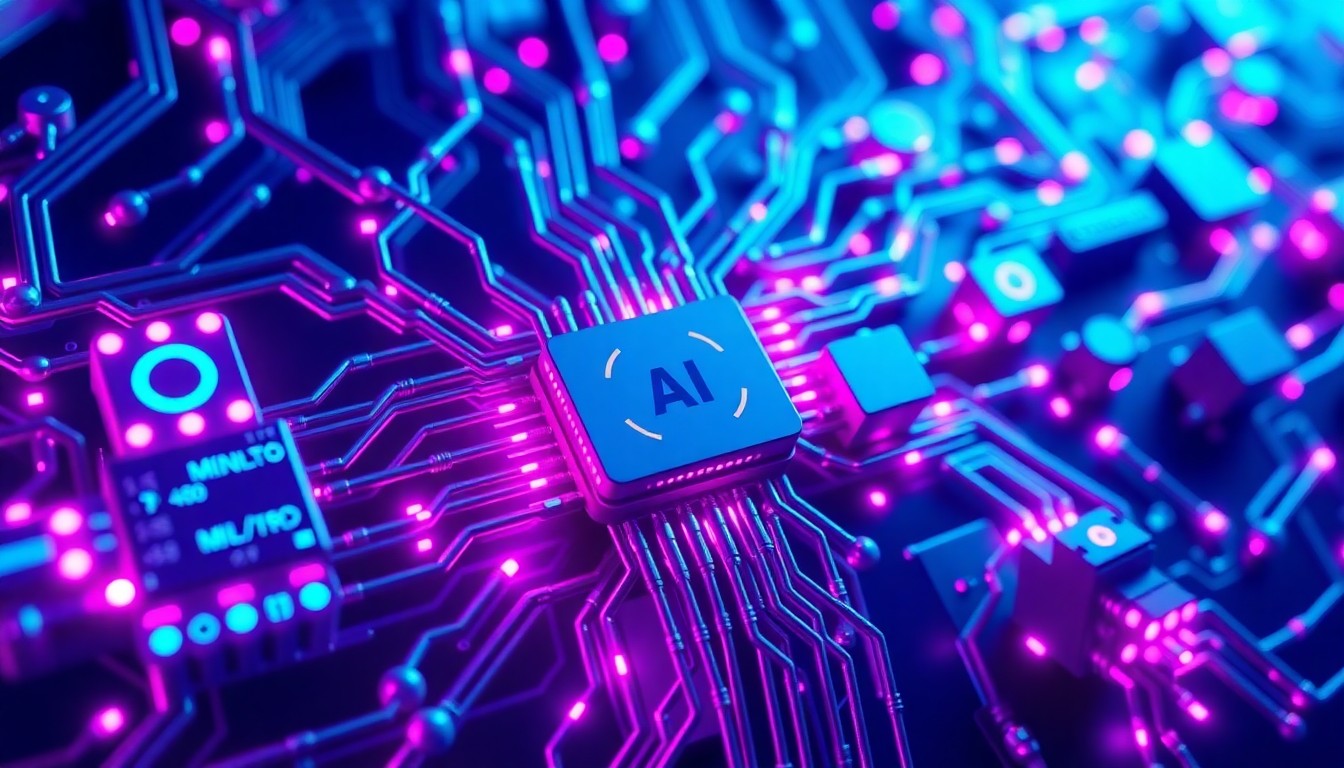

Microsoft Unveils Three New AI Models, Raising Security Concerns

The tech giant's rapid AI expansion comes with increased risk, requiring proactive security audits before deployment.

Apr. 3, 2026 at 5:50am by Ben Kaplan


As Microsoft expands its AI capabilities, the need for proactive security audits and compliance validation becomes paramount to mitigate the risks of data breaches and system vulnerabilities. (San Francisco Today)

Microsoft has released three new foundational AI models under its MAI Superintelligence banner, promising 'limitless creativity.' However, the rapid rollout has raised security concerns, and the company is aggressively hiring security directors to address known vulnerabilities. Experts warn that the expanded attack surface calls for specialized audits to mitigate risks such as prompt injection and data exfiltration, rather than relying on default configurations or waiting for post-deployment patches.

Why it matters

Introducing three new foundational models at once increases the potential entry points for adversaries, so organizations need to assess risks before integrating the models into their systems. Failure to do so could lead to data breaches, system vulnerabilities, and compliance issues, especially in regulated industries like finance and healthcare.

The details

The new models, MAI-Image-2 and two companion text models, are being rolled out via Azure AI Studio, which requires updated IAM policies. Microsoft's aggressive hiring of Directors of Security for AI teams indicates internal acknowledgment of the increased security risks. Legacy WAF rules will not catch model-specific prompt injection attacks, so external auditors are needed to validate the integration points and ensure sensitive data is not exposed to the public model weights.

- The release cycle for these models aligns with the Q2 2026 production push.

The players

Microsoft

A multinational technology company that has released three new foundational AI models under its MAI Superintelligence banner.

Mustafa Suleyman

The lead of the MAI Superintelligence team at Microsoft, pushing the boundaries of what's possible with these new AI models.

Vertex Security Labs

A cybersecurity consulting firm that specializes in AI governance and can perform adversarial testing to ensure the models resist jailbreaking attempts before they reach production.

Elena Rostova

The CTO at Vertex Security Labs, who notes that the introduction of three new foundational models simultaneously triples the potential entry points for adversaries.

Cisco

A tech company that is also seeing roles such as Director, AI Security and Research emerge, confirming that AI security is a foundational requirement for organizations deploying large language models.

What they’re saying

“The introduction of three new foundational models simultaneously triples the potential entry points for adversaries. We are seeing clients rush to engage risk assessment providers before even writing the first line of integration code. The latency in security validation is the new bottleneck.”

— Elena Rostova, CTO at Vertex Security Labs

What’s next

Organizations should engage cybersecurity consulting firms that specialize in AI governance to perform adversarial testing and ensure the models resist jailbreaking attempts before they reach production. Proactive risk assessment is mandatory, especially for regulated industries like finance and healthcare.

The takeaway

Microsoft's rapid expansion of its AI capabilities comes with significant security risks that require specialized expertise to mitigate. Enterprises must prioritize security audits and compliance validation before integrating these new models, as the cost of a data breach far outweighs the cost of a third-party security assessment.
